Modern Data Engineering and the Lost Art of Data Modelling

It wasn't long ago that the constraints imposed by finite storage and compute meant that data modelling was a necessity. Data modellers would carefully craft efficient representations of their data, all in the name of fitting it on their servers and ensuring their queries could run.

Today, these constraints are largely gone. Modern data warehouses are so powerful, flexible, and affordable that data modelling often gets treated like a relic of the past. On the surface, this seems like nothing but good news: teams can move quickly without having to agonise over schema designs, knowing that their budget and underlying infrastructure can handle it.

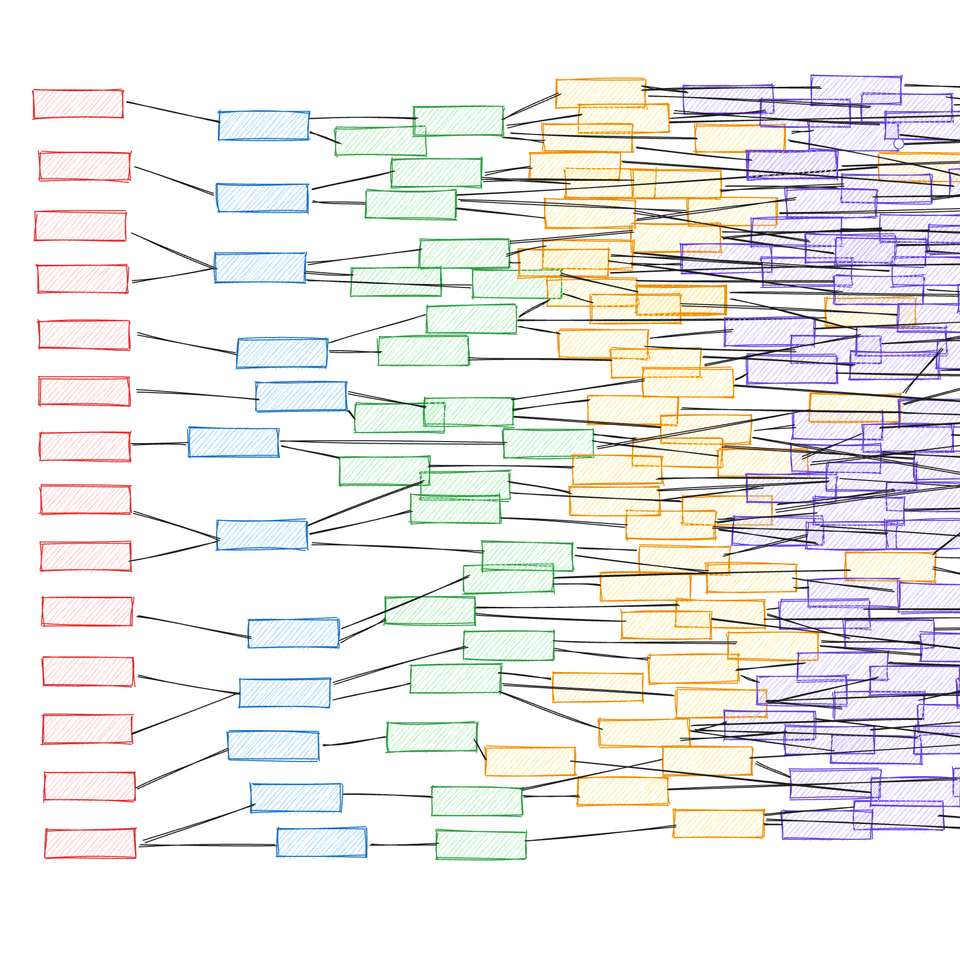

However, forgoing data modelling does come at a cost: sprawling data architectures emerge. The result is an incomprehensible, untrustworthy system that undermines insights and decision making.

What even is "data modelling"?

In its simplest form, the "what" of data modelling involves creating diagrams of data structures and the relationships between them.

Depending on the objective, data modelling is sometimes abstracted as one of three types: conceptual (for users), logical (for applications), or physical (for databases). These are often framed as stages in a three-step data modelling workflow, but in reality, this construction feels like an academic exercise that gets taught but rarely practised.

Kimball and Inmon are undoubtedly the OGs who shaped the meaning of data modelling in its most traditional sense. Their writings on data warehouses, and on concepts such as facts, dimensions, and normalisation, form the canon of data modelling fundamentals that remain relevant to this day. They present data modelling not merely as structuring data, but as a methodical process of designing reliable systems for analytics and decision-making.

A more modern interpretation, less beholden to its roots in analytics processing, would frame data modelling as an effort to accurately represent the reality of a business. Whereas in the past data modelling may have largely focussed on efficient storage and queries, today the aim should be for efficient cognition in the minds of the people who want to leverage the data. A suitably vague definition is to say that data modelling involves organising data in an intelligent way such that it yields useful information for you to reason over and build on.

It's creating this intuitive, actionable representation of data that engineers are quick to overlook today, failing to recognise its importance in the absence of cost and performance constraints acting as a forcing function.

How did things end up like this?

Every major cloud provider offers highly performant, near-infinitely scalable databases, at a low enough cost to make you feel crazy for considering any other option. On these platforms, you can get away with far more bad data modelling than you could if you were maintaining your own infrastructure.

Unlike older technologies, modern cloud databases also offer powerful ways to represent complex and hierarchical data structures, such as BigQuery's nested and repeated fields. When used sensibly, these features open up new opportunities for convenient, denormalised data models. But in the wrong hands, they can also give rise to confusing and poorly structured schemas that would be better served by more conventional approaches.
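To make the idea concrete, here is a minimal sketch in plain Python rather than BigQuery itself. The `order` record, its field names, and both helper functions are hypothetical. The nested `customer` and repeated `line_items` loosely mirror what a nested and repeated schema lets you keep in a single row, and the flattening step is similar in spirit to unnesting a repeated field.

```python
# Hypothetical order record: "customer" is a nested field and
# "line_items" is a repeated field, all held in one row.
order = {
    "order_id": 100,
    "customer": {"name": "Acme", "region": "EMEA"},
    "line_items": [
        {"sku": "W-1", "quantity": 2, "unit_price": 5.0},
        {"sku": "G-7", "quantity": 1, "unit_price": 30.0},
    ],
}


def order_total(order):
    """Sum quantity * unit_price across the repeated line items."""
    return sum(
        item["quantity"] * item["unit_price"] for item in order["line_items"]
    )


def flatten_line_items(order):
    """Produce one flat row per line item, copying the parent attributes."""
    return [
        {
            "order_id": order["order_id"],
            "customer_name": order["customer"]["name"],
            "region": order["customer"]["region"],
            **item,
        }
        for item in order["line_items"]
    ]
```

The nested form keeps an order and its items together, which is convenient when they are always read as a unit. The flattened form is what most row-oriented analysis ends up needing, so a schema that forces constant flattening is probably a sign the nesting isn't earning its keep.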

More generally, modern cloud databases not only tolerate expansive, denormalised data models, they actively encourage them. The columnar storage behind these databases makes one big table (OBT) a legitimate option with numerous advantages over more traditional models such as star schemas. However, this can wrongly be seen as an excuse to just chuck everything into a single table, without proper consideration for how to meaningfully structure the data.
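As a rough illustration of the difference, the sketch below builds a tiny star schema in plain Python and then denormalises it into one big table. All table names, columns, and values are made up for the example, and in practice this would be a SQL join in the warehouse rather than Python.

```python
# Hypothetical star schema: two dimension tables keyed by ID...
customers = {
    1: {"customer_name": "Acme", "region": "EMEA"},
    2: {"customer_name": "Globex", "region": "APAC"},
}
products = {
    10: {"product_name": "Widget", "category": "Hardware"},
    11: {"product_name": "Gizmo", "category": "Hardware"},
}
# ...and one fact table that references them.
orders = [
    {"order_id": 100, "customer_id": 1, "product_id": 10, "amount": 25.0},
    {"order_id": 101, "customer_id": 2, "product_id": 11, "amount": 40.0},
    {"order_id": 102, "customer_id": 1, "product_id": 11, "amount": 40.0},
]


def build_one_big_table(facts, customer_dim, product_dim):
    """Denormalise by copying dimension attributes onto every fact row."""
    wide_rows = []
    for fact in facts:
        row = dict(fact)
        row.update(customer_dim[fact["customer_id"]])
        row.update(product_dim[fact["product_id"]])
        wide_rows.append(row)
    return wide_rows


def revenue_by(rows, column):
    """Total the amount column grouped by any attribute, with no joins."""
    totals = {}
    for row in rows:
        totals[row[column]] = totals.get(row[column], 0.0) + row["amount"]
    return totals


obt = build_one_big_table(orders, customers, products)
```

Once the wide table exists, `revenue_by(obt, "region")` needs no joins, which is the appeal of OBT. Note that the wide table was still derived from a deliberately structured set of facts and dimensions. The modelling didn't go away, it just moved upstream.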

The availability and capabilities of modern data engineering infrastructure clearly play a role in data modelling's demise, but the tooling is also at fault. dbt — ostensibly a tool for data modelling — redefines a data model as essentially anything that looks like a SQL query. dbt removes the friction of creating and integrating these "models" into your data architecture, democratising data transformation so that almost anyone can do it.

But impulsively stacking SQL queries on top of each other just creates an analytical house of cards. dbt Labs, the company behind dbt, normalises having 700, or even 1,700, such models in its internal projects. Victims of dbt working at large companies often speak of having 10,000+ models in their pipelines. dbt as a tool isn't really to blame (it's actually pretty neat), but the sprawling data architectures it encourages undermine efforts to do good data engineering.

On a different theme, data modelling doesn't fit as naturally with the popular agile development methodologies of today as it does with the waterfall approaches of yesteryear. Companies increasingly bias for speed of engineering over everything else, leaving little time for deliberate, upfront planning of data models that are likely to be invalidated by the changing priorities of later sprints.

Is data modelling dead?

While most prominent data companies seem to be pushing a fast and loose attitude towards data modelling, there are some that champion a more disciplined approach.

Looker's data modelling language, LookML, is an abstraction over SQL that defines reusable business logic and rules across analyses. This is a modern take on a decidedly old school modelling practice of explicitly creating a semantic layer. This stance differs from most business intelligence companies, which often sidestep complexities and disregard the more theoretical aspects of modelling data.
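For a feel of what a semantic layer buys you, here is a minimal sketch in Python. It is not LookML and makes no attempt to mimic its syntax. The `MEASURES` registry, the `query` helper, and the sample rows are all hypothetical. The point is only that each piece of business logic is defined once, by name, and every analysis reuses that definition.

```python
# Hypothetical semantic layer: each measure is defined exactly once.
MEASURES = {
    "total_revenue": lambda rows: sum(r["amount"] for r in rows),
    "order_count": lambda rows: len(rows),
    "average_order_value": lambda rows: (
        sum(r["amount"] for r in rows) / len(rows) if rows else 0.0
    ),
}


def query(rows, measure, dimension=None):
    """Evaluate a named measure, optionally grouped by a dimension column."""
    if measure not in MEASURES:
        raise KeyError(f"Unknown measure: {measure}")
    compute = MEASURES[measure]
    if dimension is None:
        return compute(rows)
    groups = {}
    for row in rows:
        groups.setdefault(row[dimension], []).append(row)
    return {key: compute(group) for key, group in groups.items()}


# Made-up sample data for illustration.
orders = [
    {"region": "EMEA", "amount": 25.0},
    {"region": "APAC", "amount": 40.0},
    {"region": "EMEA", "amount": 40.0},
]
```

With this in place, `query(orders, "average_order_value", "region")` and `query(orders, "total_revenue")` both draw on the same definitions, so two dashboards can't quietly disagree about what "revenue" means.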

Once you see past the marketing jargon of ontology hydration and kinetic layers, Palantir's Foundry Ontology is fundamentally a rigorous, opinionated approach to object-oriented data modelling. This becomes a foundation for simulations and other advanced operational processes within the platform — things that would be a pipe dream for most data architectures that emerge organically. C3 AI hints at similar ideas, but with about ten times less substance and ten times more ✨ AI ✨.

Generally, it seems that every data company's SEO strategy includes having a vapid blog post on their website that pays lip service to star schemas, but says nothing compelling about data modelling. In truth, most of these tools aren't inherently pro or anti data modelling — it's all in the hands of the user.

Perhaps people are not doing data modelling simply because it's too difficult. A well-executed data model presents a seamless picture of complex properties and relationships, but designing one is much harder than it looks. It's no secret that data platform companies deploy engineers to their customers (at great expense), knowing that those customers will butcher their own data models if left to their own devices.

Has deliberate data modelling become a luxury activity for a minority with the skills and resources? Have the advances in the underlying technology swung the balance such that the opportunity cost of data modelling is too high, when there are always other things to work on with more immediate impact?

Where do we go from here?

For readers who do still see value in data modelling, and want to embrace it more in their work, I have a few points of advice:

- Learn the classic concepts and patterns. The fundamentals laid out by Kimball and Inmon are still relevant, even if highly normalised data models may not be optimal for many use cases today.

- The most useful form of data model in modern engineering is conceptual. Think hard about what the most coherent data structure is for your business or the problem you're trying to solve. This is especially important when initiating a project, but it should also be done continuously throughout its lifecycle. Maintain control over how your data model evolves over time.

- Critique your existing data architecture. Reimagine it as different data models — what would and wouldn't work for your typical workflows and querying patterns? What feels most natural to build on?

- Build a carefully constructed foundation for your data model whilst allowing for more relaxed development at the edges. This is a reasonable way to balance engineering rigour with velocity.

- Push back against short-termist thinking from stakeholders that may undermine data modelling efforts. Be the expert and convince them the ROI makes sense.

- Strive for simplicity. Avoid sprawl. Manage technical debt.

Happy modelling.
